January 2026

The Rise of Technopoly

Humans have always utilised technology to some extent. In his 1993 book, Technopoly, Neil Postman categorises the relationship between humans and technology into three types: tool-using cultures, where technology is used as a mere means to an end; technocracies, where technique becomes more important than consequences; and technopolies, where technology supersedes human culture and drives it to fit the needs of the broader societal machine.

Postman’s perspective on the third stage mirrors Marshall McLuhan’s famous phrase from his 1964 book, Understanding Media: The Extensions of Man: “the medium is the message.” This suggests that a medium’s true message is its impact, not its content. McLuhan also noted that people are largely unprepared to encounter new technologies such as television, just as “the native of Ghana is unprepared for literacy that separates them from their tribal world”. The same applies to all later technology: while content can remain the same, each medium changes how individuals act and interact, influencing their culture and their place in their environment.

An example can be adapted from Ivan Illich’s critique of cars in Tools for Conviviality (1973), which he accuses of creating a “radical monopoly”: a technology meant to connect people across distance instead disperses communities, reshapes cities around its needs, and builds dependence, gradually excluding those without cars. Communicative media extend this pattern, bridging distances virtually while eroding local bonds; users, like bees pollinating flowers (Butler, S., 1872), unwittingly propagate the system’s growth into global ‘network states’ (Srinivasan, 2022) at the expense of what would traditionally have been geographically gathered locales and organically interacting communities.

Our gradual integration into our technology has led to what McLuhan termed “cybernation”: our nervous systems and consciousness merge with a man-made “electrical society” in a unified symbiosis between us and our environments. We have already merged to an extent, as seen in the personal computer and smartphone, which we use much like the ‘exocortex’ in Charles Stross’ 2005 novel Accelerando: extending capacities of mind such as memory, processing, or navigation, and relying on technology as an external appendage. While this can still be considered tool use and can indeed be very helpful, as our devices become integrated into us and grow smarter and more capable of autonomous action, they, like our organs, may begin to steer us even as we delude ourselves into thinking that we wield and control them.

Google is one such actor, openly aiming to “organise the world’s information”. While this may seem noble, its AdSense tool collects as much “exhaust data” as possible from across its technological stack, including hardware (Pixel, Chromebook), software (Android, Chrome, Maps, etc.), and even content (YouTube, Google Search, AI engagement). “Exhaust data” here refers to metadata (data about data: not the contents, but the location, timestamp, device identifiers, etc.) that is then used to fuel “surveillance capitalism”. In such a system, users become collations of data points to be sold to the highest bidder for targeted advertising, optimising the likelihood of engagement and purchase (Zuboff, S., 2019).

Meta’s algorithmic suggestion feeds work similarly, siphoning a user’s social network, locations, inferred interests, and personality to match content to its ideal audience. While this can be justified as empowering connection, documentaries like The Social Dilemma (2020) reveal that such services are intentionally designed to keep users engaged for as long as possible. Many of those involved express regret that the business model never truly aligned with giving users a more fulfilled life, despite naive early aims. Dark patterns, such as infinite scrolling, persuasive dialogue, and intentional menu placement, have been increasingly baked into services to psychologically reinforce desired habits and retain usage, stimulating addiction and compulsive behaviour even where weak user control tools like time limits exist (Seyson, S. & Willett, W., 2025).

Figure 1 - Instagram’s navigation bar is intentionally designed for quick access to Reels, placed at the bottom right near where a right-handed user’s thumb rests, and users have no control over its positioning. [Digital Information World, 2023]

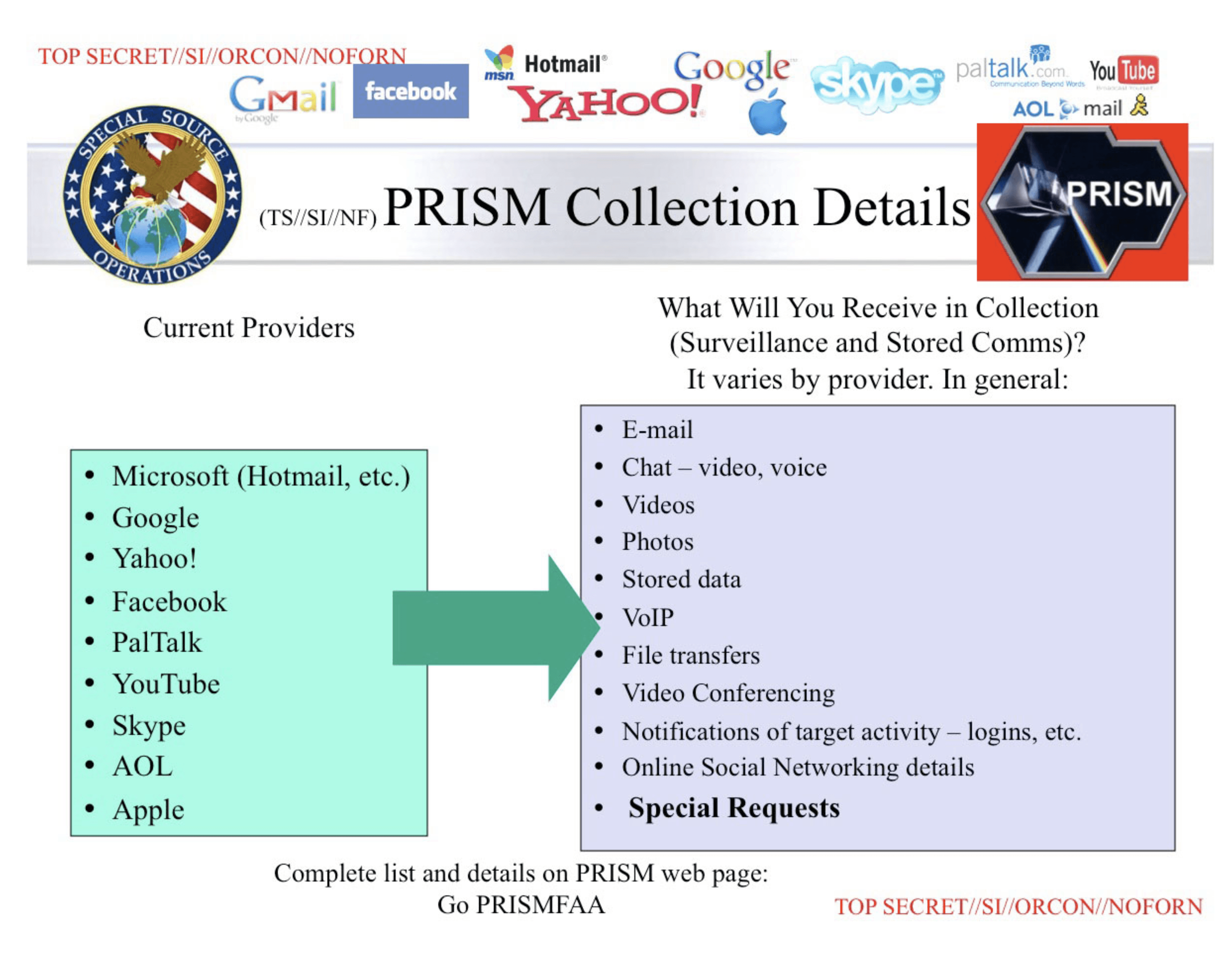

Alongside profit motivations, deeply intimate data is often also utilised for arguably even more harmful purposes. In 2013, Edward Snowden’s NSA documents revealed that tech corporations frequently collaborate with governments to provide intelligence data on users, both internationally and domestically, under the guise of ‘national security’ or ‘national interests’, with the data often obtained without warrant or transparency.

Data is often taken indiscriminately via dragnets to build as complete a picture as possible, to the point of causing “bulk data failures”: the sheer volume hoarded (on the order of exabytes, each a million terabytes) cannot be processed quickly enough (Whittaker, Z., 2016). Both Google and Meta, for instance, are even alleged to have received initial funding from agency investment fronts like In-Q-Tel (Ahmed, N., 2015), because their interests in social graphing, mapping, and efficient data collection aligned with government goals of ‘Total Information Awareness’ (Hill, G., 2024).

Figure 2 - The PRISM collection program revealed deep collaboration between Western government agencies and Big Tech corporations in accessing intelligence data across almost all media and services [The Guardian, 2013]

As McLuhan also warned, “Every new technology requires a new war” (1968), a view echoed in his 1970 statement that “World War III is a guerrilla information war with no division between military and civilian participation”. Contemporary thinking, such as François du Cluzel’s 2021 NATO-sponsored exploration of cognitive warfare, suggests such a dynamic is already unfolding: extensive personal data enables highly targeted social engineering, nudging, and narrative manipulation, which can be used to destabilise societies and individuals without any traditional combat. Our technological landscape resembles a highly volatile cognitive domain, raising profound questions about user autonomy amid increasingly immersive, always-active, and personalised technologies that can influence people in ways they are unlikely to anticipate.

The Cambridge Analytica scandal of the 2010s highlights such issues in practice. Using Facebook data, the group targeted and manipulated voters with political posts during the 2016 US election and the Brexit referendum, influencing opinions without voters’ knowledge or consent. This has had a lasting impact on Western society, with irreversible cultural, economic, and social consequences. Some could argue that democracy can no longer be trusted to produce a consensus, because algorithms push people’s beliefs towards short-sighted emotional populism (Zarrelli, P., 2025). Zuboff states, “It’s no longer enough to automate information flows about us; the goal now is to automate us,” which raises profound concerns about our future as individuals and as a society.

Even without considering human biases and influence campaigns through legacy media, Postman’s technopoly is already here: “A technocracy does not have as its aim a grand reductionism in which human life must find its meaning in machinery and technique. Technopoly does.” How can we truly have free will if everything we see is highly personalised by algorithms into overwhelming echo chambers, mixed with AI ‘slop’ media and botnets fuelling a ‘dead internet’ of fake users manufactured to guide us towards a synthesised consensus?

Whilst it’s easy to dismiss this as conspiracy theory, laws like the 2001 Patriot Act in the US and the 2016 Investigatory Powers Act in the UK show how legal access to user information is achieved without transparency. The ‘data economy’ and the powers behind it value information as a means of manipulation, and use technology to achieve it. Pushed towards digital activities, we rarely critique the manipulation made possible by our usage of, and increasing dependence on, these technologies, or the designed element in how they affect us.

Naturally, this leads to users feeling exploited. Every action is likely logged in an NSA mass database in Utah, searchable by some tech worker behind closed doors. Unless you live in the woods as a hermit covered in tin foil, you can’t realistically stop using email for work, phones for staying in contact with friends and family, or the Internet for accessing information without major inconvenience. The issue likely seems unfixable given its sheer scale, affecting so much, everywhere, all at once. Yet, knowing we cannot undo the past, the more crucial question is perhaps: what issues may arise next, and how can we work towards fixing them?

The Future

If asked about Silicon Valley's agenda, critics may accuse leaders such as Mark Zuckerberg (Meta), Elon Musk (Tesla, SpaceX, X), Eric Schmidt (ex-Google), or Larry Ellison (Oracle) of being ‘cyborg theocrats’ pursuing a singularity in which technology culminates in an AI superintelligence ‘god’ (Allewelt, B., 2025), prophesied to inevitably surpass human cognition and help us transcend biology (Kurzweil, R., 2005). The interim steps are cognitive enhancers like smartphones, followed by worn and embodied devices such as smartwatches, VR (virtual reality) headsets, and smart glasses, the latter two forming XR/MR (extended/mixed reality) (Milgram & Kishino, 1994), before any truly Strossian, cognitively integrated exocortex for directly augmented data immersion.

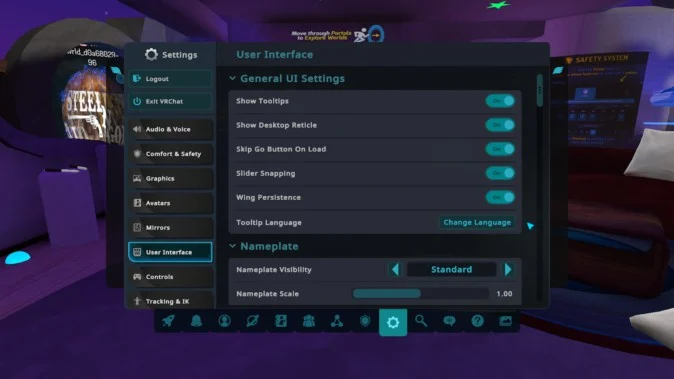

XR has been in development since the 1980s, and has gained niche but growing mainstream awareness since the early 2010s. It has evolved from PC-dependent headsets like the original Oculus Rift and Valve Index towards thinner glasses, starting with the controversial Google Glass of the 2010s and continuing with more refined present iterations like the Meta Ray-Ban Display glasses of 2025. Whilst users already merge with smartphones, a problematic though disembodied integration, XR devices can offer even more effortless user experiences through inputs such as hand gestures or eye-tracking selection. Embodied input feels like a higher-fidelity extension of one’s mind: the reflexiveness of thumb microgestures or eye tracking renders even touchscreen interfaces cumbersome and slow. Latency can be far lower with eye tracking in particular, as people don’t usually consciously think to aim and look at an interface target; they just do it.

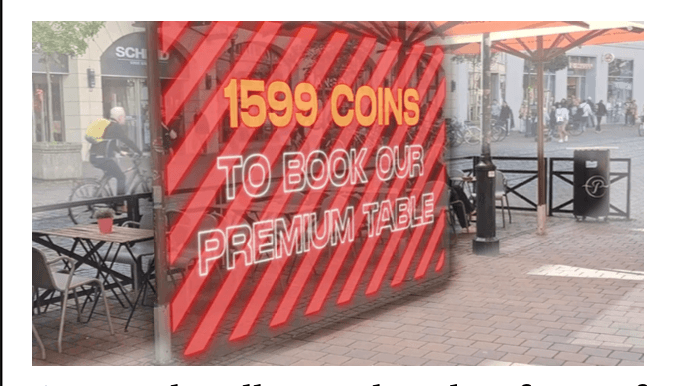

But despite the usual futuristic promises of connectivity, convenience, and productivity, XR’s potential to threaten privacy, autonomy, and wellbeing remains, along with many of the previously mentioned issues of surveillance and manipulation. XR has already been predicted to utilise even more intimate dark patterns to manipulate users; speculation suggests that always-enabled cameras and linked displays could be used to virtually block access to environmental spaces, overlay personalised ads, or instigate negative virtual emotional stimuli, for example (Meinhardt et al., 2025). The ability to alter reality towards particular ends becomes limitless in an extended one.

Given this knowledge, it’s crucial that we consider these risks before widely integrating XR into society, so as to avoid problems and changes that may not benefit us. With such technology, how can we, say, prevent reflexive eye movements from being logged and used to target ads, or ensure any privacy when cameras and microphones are constantly attached to ourselves and others?

Critics who also forecast this on the horizon, like Tristan Harris and James Poulos, have testified before the U.S. Congress about the dangers of algorithms and persuasive technology and about the future trajectory of this evolution, warning that the cyborg theocrats’ belief that technology will inevitably surpass humanity is already harming, and will continue to harm, us, our democracy, and our cultures. These expanding trajectories have largely not been held legally or democratically accountable, as legislation lags behind such rapid technological advancement. Few are articulate enough to even express the concerns accurately; societal discourse often focuses on trivial and lobbied implications over the underlying technological and evolutionary risks, which can be hard to legislate for due to their depth and complexity.

However, conveniently, many seemingly terrible and dangerous ideas have already been accurately speculated upon in fictional media. For instance, the show Black Mirror has often prophetically warned about XR issues regarding autonomy, external threat actors, and by-design control through episodes like The Entire History of You (2011) and Men Against Fire (2016), both of which will be discussed more extensively as case studies later on. Fiction’s speculative predictions are often more successful because they can embed devices in temporary and plausible ‘what if’ worlds, visualising problems through characters’ direct, contextually clear interactions.

This process, termed “design fiction,” was first used by Bruce Sterling in his 2005 manifesto Shaping Things, where he traced the evolution of objects from handmade artefacts to machined objects, then gizmos, and proposed “spimes” as the next stage: programmable, trackable objects with digital identities, fabricated on demand, recyclable, and data-integrated, characteristic of the ‘Internet of Things.’ Sterling used this to critique consumerism and advocate designer involvement in sustainable, ubiquitous futures. However, it was in his later 2009 article for Interactions magazine, titled Design Fiction, that he elaborated on the term, defining it as “the deliberate use of diegetic prototypes to suspend disbelief about change.”

The term ‘diegetic prototype’ is derived from film scholar David A. Kirby, who introduced it in his 2010 paper, The Future Is Now: Diegetic Prototypes and the Role of Popular Films in Generating Real-World Technological Development, to describe fictional technology embedded in a story’s world as an influence on real technological innovation. Kirby argues that technological progress is not linear or inevitable, but is shaped by social, economic, and cultural barriers such as public scepticism or funding gaps. Fiction in media such as film, meanwhile, presents speculative technologies as fully realised interactions within a story’s narrative, making them feel viable and thus sparking real-world desire, investment, and innovation.

Unlike traditional prototypes, diegetic ones are hypothetical and can perform any mock functionality through plot, dialogue, and character interactions; their focus is on normalising the tech as an everyday artefact. Often, he argues, this technique is also used to stimulate desire or make viewers comfortable with ideas about what may be in the works ahead of true viability.

This idea of design fiction is built on further by Julian Bleecker in his 2009 essay Design Fiction: A Short Essay on Design, Science, Fact, and Fiction, where he defines the concept as a blend of the three used to explore how technology within fictional case studies shapes life, ideally to provoke thought on a future’s implications without needing the full functionality of a prototype or letting it come to pass. One case study Bleecker focuses on is the gesture interface in Steven Spielberg’s 2002 Minority Report, designed by John Underkoffler to fit the narrative as a believable way to interact with a future computer.

Figure 3 - Minority Report (2002)’s hand-gestural computer interface is a widely cited example of a design fiction artefact, and it has had a significant impact on XR’s development [Slashfilm, 2010]

Figure 4 - VRChat, the closest parallel to Stephenson’s Metaverse, is navigated via flat UI panels leading to smaller isolated worlds, rather than truly spatially via a literal street/metro system, in order to reduce latency [Mogura VR News, 2023]

Figure 5 - Meta’s sub-brands form a comprehensive ecosystem of existing users, giving it a high capacity to shape the next stage of technology via XR [Agência Brasil, 2025]

Figure 6 - ‘Snow’ on a TV as a result of electric static noise is where the term “Snow Crash” originates [Mysid, 2006]

Figure 7 - A Sumerian writing tablet with pictographic pre-cuneiform script, currently held in the Louvre [Mbzt, 2013]

Figure 8 - Example of an epilepsy pre-warning used on a YouTube video [Mark Robotham, 2014]

Figure 9 - Liam with his eyes ‘activated’ whilst replaying a memory [The Mirror, 2018]

Figure 10 - Liam at airport security having his last 48 hours of memory reviewed [IMDb, 2011]

Figure 11 - Liam is warned by his Grain against driving whilst drunk when it recognises the two activities in sequence [FilmAffinity, 2025]

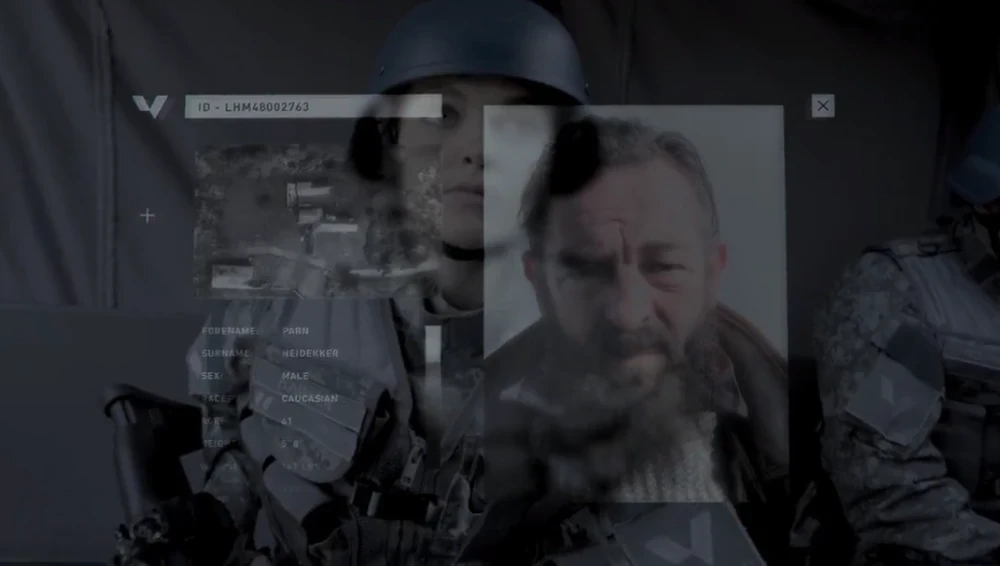

Figure 12 - Stripe’s MASS implant point of view showing him intel on his squadron’s target before an operation [Black Mirror Fandom, 2025]

Figure 13 - Stripe is shown a video of himself agreeing to the MASS implant; yet, given modern AI developments, one could argue it could also have been deepfaked as a further way to manipulate him... [TV Obsessive, 2018]

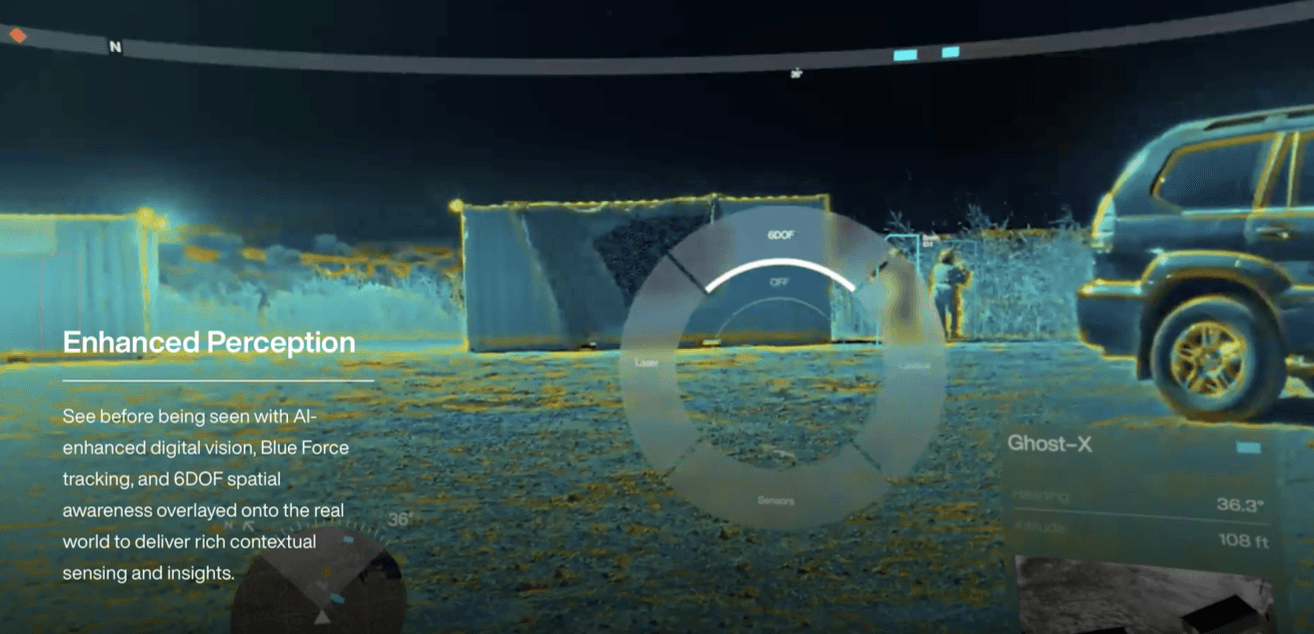

Figure 14 - Thermal vision to see through walls with data overlays on the EagleEye military helmet [Anduril, 2025]

Figure 15 - Mock-up of a potential dark pattern in XR design, where a restaurant is blocked with virtual elements to promote a paid experience, ruining the environment’s real ambience [Meinhardt et al., 2025]

Figure 16 - The HUD of the Kiroshi Optics is also the game's HUD [Game UI Database, 2020]

Figure 17 - The Even Realities G2 glasses provide a functionally similar HUD experience, connected to one’s smartphone via Bluetooth instead of a ‘cyberdeck’ [Even Realities, 2025]

Figure 18 - One can hack others around them using their Kiroshis via quickhacks on a list menu, and also scan them for additional information [Game UI Database, 2020]

Figure 19 - Adam Smasher has his own branded “Smasher ICE”, suggesting those technical enough could develop personalised defence measures to prevent and counter general-level attacks [carbonatedshark55, 2024]

References

Images

Anwar, H. (2023) Instagram revamps its user interface with minor tweaks to layout and removal of shop tab from homescreen - https://www.digitalinformationworld.com/2023/01/instagram-revamps-its-user-interface.html

The Guardian (2013) NSA Prism program slides - https://www.theguardian.com/world/interactive/2013/nov/01/prism-slides-nsa-document

Sciretta, P. (2010) VOTD: Real life Minority Report user interface demonstration - https://www.slashfilm.com/509384/votd-real-life-minority-report-user-interface-demonstration/

MoguLive (2023) 「VRChat」β版アプデでUIが一部日本語化。アイトラッキングにも対応開始 [“VRChat” beta update partially localises the UI into Japanese and begins supporting eye tracking] - https://www.moguravr.com/vrchat-39/

Thereso, P. (2025) Audiência pública discute desinformação e direitos nas redes [Public hearing discusses disinformation and rights on the networks] - https://agenciabrasil.ebc.com.br/es/node/1627238

Mysid (2006) TV noise.jpg - https://commons.wikimedia.org/wiki/File:TV_noise.jpg

Rama (n.d.) P1150884 Louvre Uruk III tablette écriture précunéiforme AO19936 rwk.jpg - https://en.wikipedia.org/wiki/Sumer#/media/File:P1150884_Louvre_Uruk_III_tablette_écriture_précunéiforme_AO19936_rwk.jpg

Robotham, M. (2016) 136.10Hz - Energy Balance - Epilepsy Warning - https://www.youtube.com/watch?v=04CYcxQQ0tQ

Mirror (2018) The Entire History of You ends Black Mirror's first season in a hell where memories aren't private - https://www.mirror.co.uk/tv/tv-news/blackmirror-thirdepisode-theentirehistoryofyou-12260563

IMDb (n.d.) Still from Black Mirror: The Entire History of You - https://www.imdb.com/title/tt2089050/mediaviewer/rm3090830848/?ref_=ttmi_mi_1_1

FilmAffinity (n.d.) Image from Black Mirror: The Entire History of You (2011) - https://www.filmaffinity.com/us/movieimage.php?imageId=728384824

Black Mirror Wiki (n.d.) MASS - https://black-mirror.fandom.com/wiki/MASS

Crain, C. (2018) Black Mirror: “Men Against Fire” recap and analysis - https://tvobsessive.com/2018/04/30/black-mirror-men-against-fire/

Anduril Industries (2025) EagleEye - https://www.anduril.com/eagleeye

Meinhardt, L. et al. (2025) 'Mind games! Exploring the impact of dark patterns in mixed reality scenarios' - doi:10.1145/3743709

Game UI Database (2020) Cyberpunk 2077 - https://www.gameuidatabase.com/gameData.php?id=439

Even Realities (2025) Smart glasses with display & ambient AI prompts | Even G2 - https://www.evenrealities.com/smart-glasses

Game UI Database (2020) Cyberpunk 2077 - https://www.gameuidatabase.com/gameData.php?id=439

carbonatedshark55 (2024) I didn't realize Adam Smasher had anti-netrunner cyberware called Smasher ICE - https://www.reddit.com/r/cyberpunkgame/comments/1dc23lt/i_didnt_realize_adam_smasher_had_antinetrunner/

Research

21e8 (2022) 21e8 The Magic Number Company January 2022 pitchdeck - https://drive.google.com/file/d/1eSqtKqRROtGmVcmDm3pPe37djiHMUZrd/view?pli=1

Ahmed, N. (2015) How the CIA made Google - https://medium.com/@NafeezAhmed/how-the-cia-made-google-e836451a959e

'AI slop' (2026) Wikipedia - https://en.wikipedia.org/wiki/AI_slop

Allewelt, B. (2025) Cyborg theocracy - https://narrascaping.substack.com/p/cyborg-theocracy

Amazon (2025) Amazon’s delivery glasses: the newest innovation designed to enhance the delivery experience - https://www.aboutamazon.com/news/transportation/smart-glasses-amazon-delivery-drivers

Anduril (2025) Anduril’s EagleEye puts mission command and AI directly into the warfighter’s helmet - https://www.anduril.com/news/anduril-s-eagleeye-puts-mission-command-and-ai-directly-into-the-warfighter-s-helmet

Anzolin, E. & Lo Nostro, G. (2025) Ray-Ban Meta glasses take off but face privacy and competition test - https://www.reuters.com/sustainability/boards-policy-regulation/ray-ban-meta-glasses-take-off-face-privacy-competition-test-2025-12-09/

Apple (2025) Vision Pro - tech specs - https://www.apple.com/apple-vision-pro/specs/

Baldry, M. et al. (2024) 'From embodied abuse to mass disruption: generative, inter-reality threats in social, mixed-reality platforms' - doi:10.1145/3696015

Ball, M. (2024) Interviewing Epic Games Founder/CEO Tim Sweeney and Author/Entrepreneur Neal Stephenson - https://www.matthewball.co/all/sweeneystephenson

BBC (2021) Apparently, it's the next big thing. What is the metaverse? - https://www.bbc.co.uk/news/technology-58749529

BBC (2024) Ex-Israeli agents reveal how pager attacks were carried out - https://www.bbc.co.uk/news/articles/cwy3l02wxqdo

BibleGateway (2025) Holy Bible (NIV) - https://www.biblegateway.com/passage/?search=Revelation%2013&version=NIV

Bleecker, J. (2009) Design fiction: a short essay on design, science, fact and fiction. Near Future Laboratory - https://systemsorienteddesign.net/wp-content/uploads/2011/01/DesignFiction_WebEdition.pdf

Brooker, C. (2011) ‘The entire history of you’, Black Mirror, series 1, episode 3

Brooker, C. (2016) ‘Men against fire’, Black Mirror, series 3, episode 5

Butler, S. (1872) 'The book of the machines' - https://zyg.edith.reisen/k/artifact/book_of_machines

CD Projekt Red (2020) Cyberpunk 2077

Center for Humane Technology (2019) Tristan Harris Congress testimony: understanding the use of persuasive technology - https://www.youtube.com/watch?v=ZRrguMdzXBw

CERN (2025) The birth of the Web - https://home.cern/science/computing/birth-web

Chomsky, N. (1957) Syntactic structures

Darktrace (2025) Cyber AI Analyst - https://www.darktrace.com/cyber-ai-analyst

Dawkins, R. (1976) The selfish gene

'Dead Internet theory' (2026) Wikipedia - https://en.wikipedia.org/wiki/Dead_Internet_theory

'Driver drowsiness detection' (2025) Wikipedia - https://en.wikipedia.org/wiki/Driver_drowsiness_detection

Du Cluzel, F. (2020) Cognitive warfare - https://innovationhub-act.org/wp-content/uploads/2023/12/20210113_CW-Final-v2-.pdf

Dunne, A. and Raby, F. (2013) Speculative everything: design, fiction, and social dreaming.

Enard, W. et al. (2002) 'Molecular evolution of FOXP2, a gene involved in speech and language'

'ePassport gates' (2025) Wikipedia - https://en.wikipedia.org/wiki/EPassport_gates

Even Realities (2025) Even G2 - https://www.evenrealities.com/smart-glasses

Féval, C. (2024) Enshittification is a feature, not a bug - https://fev.al/posts/enshittification/

'Fifth-generation warfare' (2025) Wikipedia - https://en.wikipedia.org/wiki/Fifth-generation_warfare

Fight Chat Control (2026) Overview - https://fightchatcontrol.eu/#overview

Foster, N. (2013) The future mundane - https://www.core77.com/posts/25678/the-future-mundane-25678

GeeksforGeeks (2025) Difference between Web 1.0, Web 2.0, and Web 3.0 - https://www.geeksforgeeks.org/blogs/web-1-0-web-2-0-and-web-3-0-with-their-difference/

Gibson, W. (1984) Neuromancer

'Global cultural flows' (2025) Wikipedia - https://en.wikipedia.org/wiki/Global_cultural_flows#Mediascape

Goldenberg, A. and Finkelstein, J. (2025) Cyber swarming, memetic warfare and viral insurgency - https://networkcontagion.us/wp-content/uploads/NCRI-White-Paper-Memetic-Warfare.pdf

Google (n.d.) ‘Organise the world’s information’ - https://about.google/company-info/

Hill, G. (2024) Facebook, a computing pioneer, a secret government program, and a strange coincidence - https://whyy.org/segments/facebook-a-computing-pioneer-a-secret-government-program-and-a-strange-coincidence/

How-To Geek (2023) How to use the ping command to test your network - https://www.howtogeek.com/355664/how-to-use-ping-to-test-your-network/

Illich, I. (1973) Tools for conviviality

Imaishi, H. (dir.) (2022) Cyberpunk: Edgerunners - https://www.netflix.com/title/81054853

Institute of Network Cultures (2021) Critical meme reader: global mutations of the viral image - https://networkcultures.org/blog/publication/critical-meme-reader-global-mutations-of-the-viral-image/

IPBA Connect (2025) How Apple’s IP strategy creates powerful lock-in effects in a digital ecosystem - https://profwurzer.com/how-apples-ip-strategy-creates-powerful-lock-in-effects-in-a-digital-ecosystem/

Jaynes, J. (1976) The origin of consciousness in the breakdown of the bicameral mind

Johnny Mnemonic (1995) Directed by R. Longo

Kirby, D.A. (2010) 'The future is now: diegetic prototypes and the role of popular films in generating real-world technological development' - https://www.researchgate.net/publication/249721702_The_Future_Is_Now_Diegetic_Prototypes_and_the_Role_of_Popular_Films_in_Generating_Real-World_Technological_Development

Kurzweil, R. (2006) The singularity is near: when humans transcend biology

Levy, K. (2014) A San Francisco bar banned Google Glass because it doesn't want patrons being secretly filmed - https://finance.yahoo.com/news/san-francisco-bar-decided-ban-204213419.html

Lindley, J. and Coulton, P. (2015) 'Back to the future: 10 years of design fiction' - doi:10.1145/2783446.2783592

Marcel, A.J. (1983) 'Conscious and unconscious perception: experiments on visual masking and word recognition'

Marr, B. (2021) The fascinating history and evolution of extended reality (XR) – covering AR, VR and MR - https://www.forbes.com/sites/bernardmarr/2021/05/17/the-fascinating-history-and-evolution-of-extended-reality-xr--covering-ar-vr-and-mr/

McLuhan, M. (1964) Understanding media: the extensions of man

McLuhan, M. (1968) War and peace in the global village

McLuhan, M. (1970) Culture is our business

Meinhardt, L. et al. (2025) 'Mind games! Exploring the impact of dark patterns in mixed reality scenarios' - doi:10.1145/3743709

Meta (2025) Meta Quest 3 - display and optics technology - https://www.meta.com/gb/quest/quest-3/

Meta (2025) Orion - the future of wearables - https://www.meta.com/en-gb/emerging-tech/orion/

Microsoft (2025) Microsoft Security Copilot - https://www.microsoft.com/en-gb/security/business/ai-machine-learning/microsoft-security-copilot

Milgram, P. and Kishino, F. (1994) 'A taxonomy of mixed reality visual displays' - https://www.researchgate.net/publication/231514051_A_Taxonomy_of_Mixed_Reality_Visual_Displays

Minority Report (2002) Directed by S. Spielberg

Mori, S. et al. (2020) 'InpaintFusion: incremental RGB-D inpainting for 3D scenes' - https://ieeexplore.ieee.org/document/9184389

Naprys, E. (2025) View an ad and you’re cooked: Intellexa planted spyware with zero clicks - https://cybernews.com/security/intellexa-planted-spyware-with-zero-click-ads/

NoScript (2025) What is it? - https://noscript.net

Octra Labs (2024) Octra Network litepaper - https://octra.org/litepaper.pdf

Ore, J. (2018) Surfing the Net is old school. Soon, we may inhabit it - https://magazine.utoronto.ca/research-ideas/technology/surfing-the-net-is-old-school-soon-we-may-inhabit-it-janusvr-james-mccrae-jonathan-ore/

Orlowski, J. (dir.) (2020) The social dilemma

Pondsmith, M. (1990) Cyberpunk 2020

Postman, N. (1993) Technopoly: the surrender of culture to technology

Potter, M.C. et al. (2014) 'Detecting meaning in RSVP at 13 ms per picture'

Poulos, J. (2021) Human, forever: the digital politics of spiritual war

Poulos, J. (2021) Testimony to the US Senate Committee on Commerce, Science, and Transportation, Subcommittee on Communications, Media, and Broadband: “Disrupting dangerous algorithms: addressing the harms of persuasive technology” - https://www.commerce.senate.gov/services/files/B38CCF21-4A40-4C56-BD40-79ED720B9F01

PowerfulJRE (2025) Joe Rogan Experience #2422 - Jensen Huang - https://youtube.com/watch?v=3htpKYix4x8

Proton (2024) What is pixel tracking? How to tell when emails are tracking you - https://proton.me/blog/pixel-tracking

Reiners, M.C.R. (2025) Knock knock: what to do when police arrive over online speech - https://reiners.org.uk/knock-knock-what-to-do-when-police-arrive-over-online-speech/

Resonite (2025) The future of virtual reality whitepaper - https://resonite.com/Whitepaper.pdf

Richter, F. (2025) AWS stays ahead as cloud market accelerates - https://www.statista.com/chart/18819/worldwide-market-share-of-leading-cloud-infrastructure-service-providers/

RightToCompute (2026) #RightToCompute - https://righttocompute.ai/

Seyson, S. and Willett, W. (2025) 'Exploring the evolution of dark patterns and manipulative design on Instagram' - doi:10.1145/3706599.3719771

Snowden, E. (2019) Permanent record

Song, V. (2024) College students used Meta’s smart glasses to dox people in real time - https://www.theverge.com/2024/10/2/24260262/ray-ban-meta-smart-glasses-doxxing-privacy

Srinivasan, B. (2022) The network state: how to start a new country - https://thenetworkstate.com/

Sterling, B. (2005) Shaping things

Sterling, B. (2009) 'Design fiction' - doi:10.1145/1516016.1516021

Stephenson, N. (1992) Snow crash

Stross, C. (2005) Accelerando

@stspanho (2025) ‘I've been building an XR app for a real-world ad blocker using Snap Spectacles...’ - https://x.com/stspanho/status/1935728608514838540

Terranova Security (2024) 9 examples of social engineering attacks - https://www.terranovasecurity.com/blog/examples-of-social-engineering-attacks

The Guardian (2013) NSA Prism program slides - https://www.theguardian.com/world/interactive/2013/nov/01/prism-slides-nsa-document

The Independent (2017) Google cofounder Sergey Brin says these 2 books changed his life - https://www.independent.co.uk/news/google-cofounder-sergey-brin-2-books-changed-life-advise-helpful-reading-a7686246.html

'Total Information Awareness' (2025) Wikipedia - https://en.wikipedia.org/wiki/Total_Information_Awareness

United Kingdom Parliament (2016) Investigatory Powers Act 2016 - https://www.legislation.gov.uk/ukpga/2016/25/contents

United Kingdom Parliament (2023) Online Safety Act 2023 - https://www.legislation.gov.uk/ukpga/2023/50/contents

United States Congress (2001) Uniting and Strengthening America by Providing Appropriate Tools Required to Intercept and Obstruct Terrorism (USA PATRIOT Act) Act of 2001, Public Law 107-56, 107th Congress - https://www.govinfo.gov/content/pkg/PLAW-107publ56/html/PLAW-107publ56.htm

Urbit (2025) Urbit explained - https://urbit.org/overview/urbit-explained

U.S. Department of War (2025) 12 military innovations that are now everyday parts of society - https://www.war.gov/News/Feature-Stories/Story/Article/4337784/12-military-innovations-that-are-now-everyday-parts-of-society/

'Utah Data Center' (2025) Wikipedia - https://en.wikipedia.org/wiki/Utah_Data_Center

VITURE (2025) VITURE x Cyberpunk 2077 Luma Cyber XR Glasses - https://www.viture.com/cyberpunk2077?color=Jet+Black&size=Regular+(IPD+58-70mm)

Vox (2023) Snow Crash author Neal Stephenson predicted the metaverse. What does he see next? - https://www.vox.com/technology/2023/3/6/23627351/neal-stephenson-snow-crash-metaverse-goggles-movies-games-tv-podcast-peter-kafka-media-column

VRChat Inc. (2017) VRChat

W3Techs (2025) Usage statistics and market share of Cloudflare - https://w3techs.com/technologies/details/cn-cloudflare

Wang, Y. (2023) Regulations - drivers for mandating driver monitoring systems - https://www.idtechex.com/en/research-article/regulations-drivers-for-mandating-driver-monitoring-systems/30322

Wilcox, M. (2020) 21e8 Index explainer - https://www.youtube.com/watch?v=6HYdTtIoyts

Wilkins, A. et al. (2022) 'Visually sensitive seizures: an updated review by the Epilepsy Foundation'

World (2025) World ID - https://world.org/world-id

Xavier, H.S. (2024) The Web unpacked: a quantitative analysis of global Web usage - https://arxiv.org/html/2404.17095v2

Xerox (2021) DARPA awards PARC contract to accelerate learning of complex skillsets through artificial intelligence and augmented reality - https://www.news.xerox.com/news/darpa-awards-parc-contract-to-accelerate-learning-of-complex-skillsets-through-artificial-intelligence-and-augmented-reality

Yuwei (2025) Driver fatigue monitoring system - https://en.yuweitek.com/driver-fatigue-monitor-system.html

Zarraelli, P. (2025) Peter Thiel’s Misunderstood Vision - https://sfl.media/peter-thiel-isnt-anti-democracy-hes-post-democracy/

ZDNET (2016) NSA is so overwhelmed with data, it's no longer effective, says whistleblower - https://www.zdnet.com/article/nsa-whistleblower-overwhelmed-with-data-ineffective/

Zuboff, S. (2019) The age of surveillance capitalism: the fight for a human future at the new frontier of power